Rabin Adhikari

रबिन अधिकारी

Campus E1 5

66123 Saarbrücken, Germany

Hi! I’m Rabin, a Master’s student in Data Science and Artificial Intelligence at Saarland University. I also work part-time as a Research Assistant at the Max Planck Institute for Software Systems (MPI-SWS), where I’m part of the Machine Teaching group led by Prof. Adish Singla.

My main focus these days is trustworthy AI: I’m interested in building systems that are more robust, reliable, and aligned with how people actually use them. Before joining MPI-SWS, I worked as a Data Scientist (Working Student) at QuantPi, where I evaluated large, production-grade models from NVIDIA for bias, robustness, and overall performance in real-world deployment settings.

Before coming to Germany, I was a Research Assistant at NAAMII in Nepal, where I worked on a mix of machine learning domains, including NLP, multimodal learning, and medical imaging. I’ve also had hands-on experience as a full-stack developer, which keeps me grounded in the practical side of things.

Outside of work and studies, I enjoy hacking on personal projects, diving into research papers, or just chatting about the quirks and challenges of modern AI systems.

If you’d like to know more about my background, my CV is available here.

News

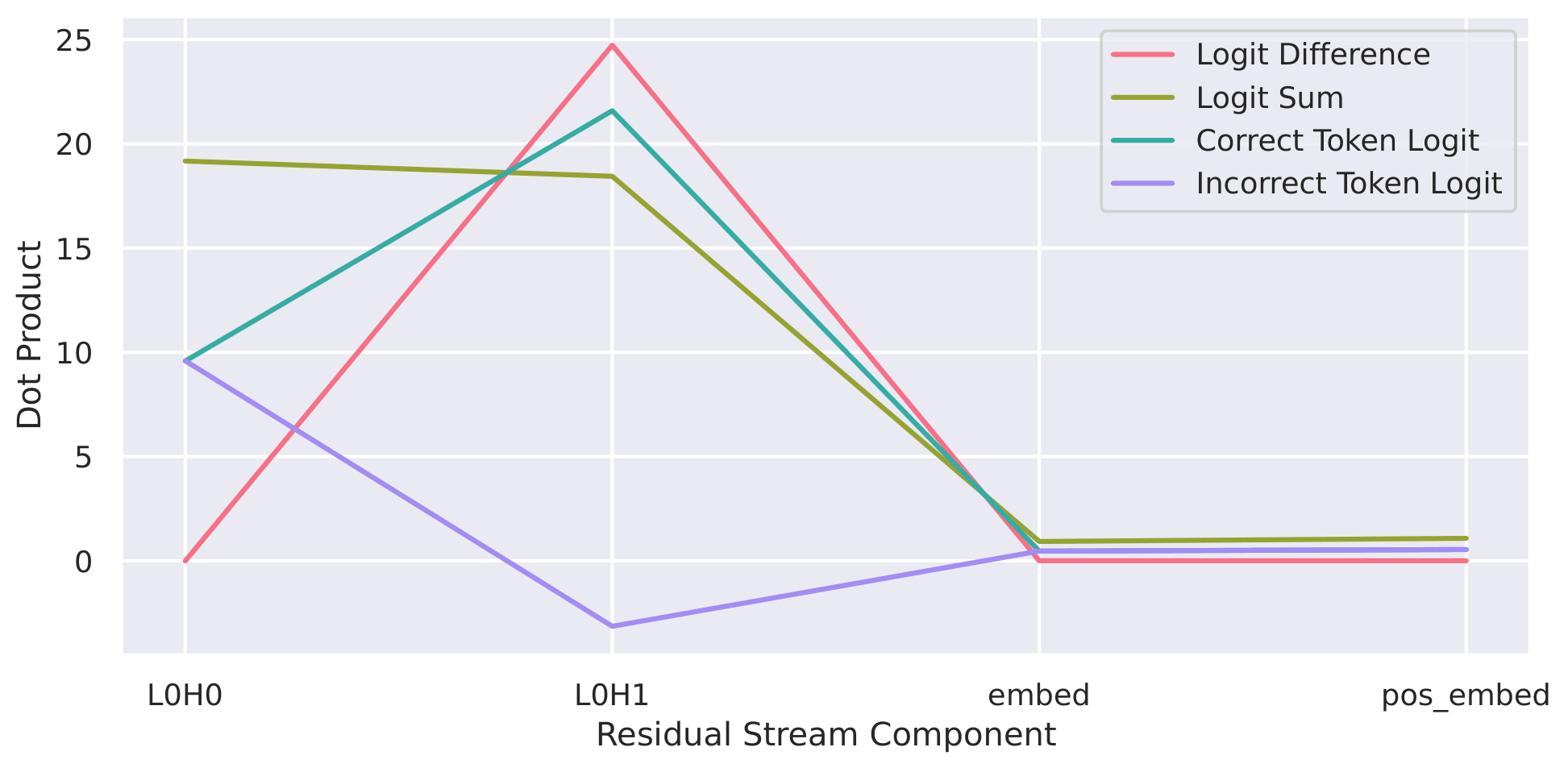

| Date | News |
|---|---|
| Apr 25, 2026 | "Emergence of Minimal Circuits for Indirect Object Identification in Attention-Only Transformers" has been accepted at the Student Research Workshop of ACL 2026 in San Diego, USA. |
| Feb 20, 2026 | I am tutoring the Machine Learning core lecture in the Summer Semester 2026 under Prof. Isabel Valera at Saarland University. |
| Oct 02, 2025 | I have been awarded the Deutschlandstipendium, a prestigious merit-based scholarship funded jointly by the German Federal Ministry of Education and Research (BMBF) and private sponsors. My scholarship is sponsored by AMGEN GmbH for the funding period 2025/26. |
| Apr 01, 2025 | Started working as a HiWi (student research assistant) at the Max Planck Institute for Software Systems (MPI-SWS) under Prof. Adish Singla to research uncertainty quantification in LLMs. |
| Nov 15, 2024 | Joined QuantPi as a Data Scientist (Working Student) to analyze the robustness of AI models in production. |

Latest Posts

| Date | Post |
|---|---|
| May 07, 2021 | Training of Word Embeddings |
| Oct 30, 2020 | Importing OpenVPN Configuration |

Selected Publications

- MICCAI (Poster)

VLSM-Adapter: Finetuning Vision-Language Segmentation Efficiently with Lightweight Blocks. In Medical Image Computing and Computer Assisted Intervention - MICCAI 2024 - 27th International Conference, Marrakesh, Morocco, October 6-10, 2024, Proceedings, Part IX, Oct 2024